Blog

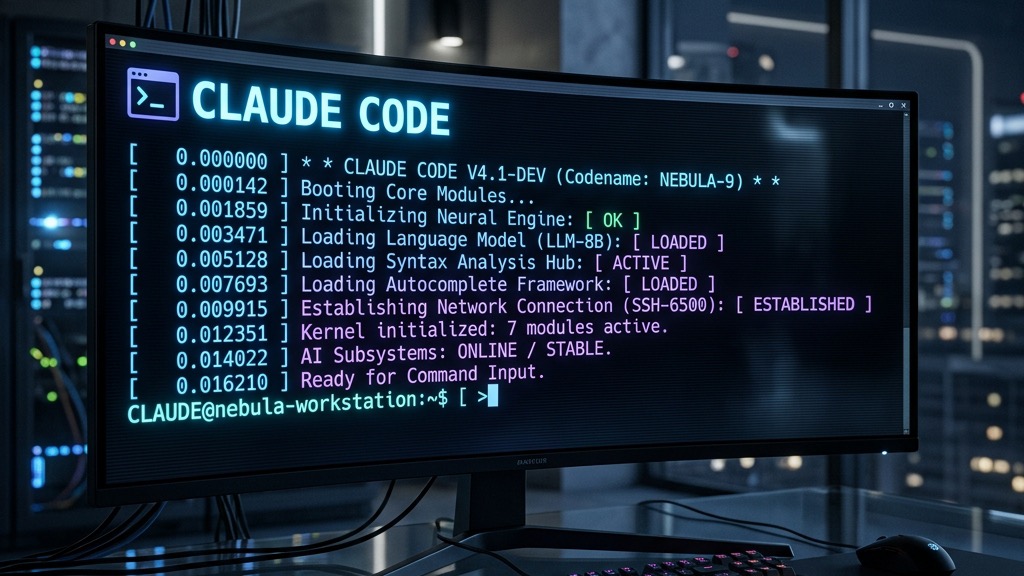

From Keystroke to Interactive REPL: An Architecture Deep Dive into Claude Code’s Boot Sequence

When you type `claude` into your terminal, there is a highly sophisticated Claude Code boot sequence that springs into action before...

Building Intelligent Conversational Agents with LangGraph: A Tutorial Guide

Creating sophisticated conversational agents requires more than just a powerful language model. You need a framework that can manage complex...

Crafting a Scalable Real-Time Interaction System with Redis: A Deep Dive into Integrating Character, Environment, and LLM Services

Understanding the Problem 🎮 What is the System? This system is designed to manage real-time interactions within a virtual environment, where...

Automating Postgres and pgvector Setup with Docker

When it comes to managing databases in development environments, Docker is a lifesaver for many developers due to its simplicity and isolation...

Gemma: Google’s New Family of Open AI Models for Text Generation

Google’s Vision for Accessible AI: Google has unveiled an exciting new venture in the realm of artificial intelligence: Gemma. This innovative...

Nomic vs OpenAI Embeddings

In the world of text embeddings, the Nomic vs OpenAI Embeddings debate marks a pivotal shift towards open-source alternatives. We stand on the brink...

Sora: Text-to-Video Breakthrough

In the ever-evolving world of artificial intelligence, the bridge between imagination and reality is being crossed in more innovative ways than ever...

Exploring Lumiere: Google’s Astonishing Leap in AI-Driven Video Creativity

Hey there! Have you heard about Lumiere? If not, you’re in for a treat. Google’s latest marvel, Lumiere, is turning heads in the world of AI and video...

Revolutionising AI with Federated Learning: A New Era of Secure and Diverse Data Usage

Introduction: The landscape of artificial intelligence (AI) is evolving rapidly, and with it, the methodologies for training machine learning models...

Building a Retrieval Augmented Generation (RAG) System: Harnessing AI for Enhanced Information Retrieval

Introduction: In the realm of artificial intelligence and natural language processing, Retrieval Augmented Generation (RAG) systems stand as a beacon...

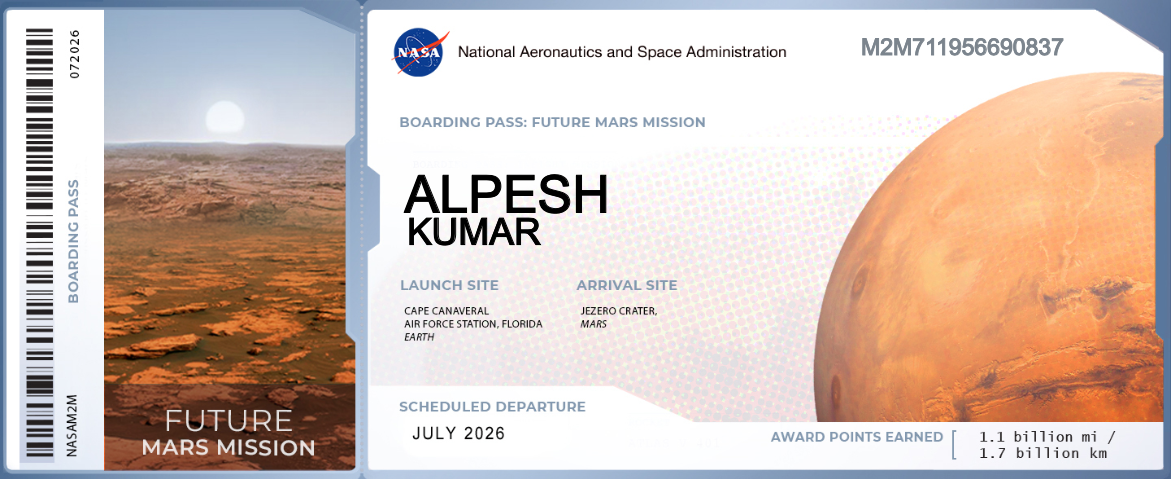

Jezero Crater, Mars Boarding Pass: Future Mars Mission

Have you signed up yet to send your name to Mars on a future NASA mission and get a free “boarding pass?” If not, join more than 22...

Introduction to Web Scraping with Python

Web scraping is a vital technique, frequently used in...